I am an incoming Assistant Professor at the ECE department of the National University of Singapore, after spending one year at Massachusetts General Hospital & Harvard Medical School. Previously, I was a postdoctoral fellow advised by Dr. Atlas Wang in the Institute for Foundations of Machine Learning (IFML), affiliated with the UT AI Health Lab and the Good System Challenge, and obtained my Ph.D. in Computer Science and Engineering from Michigan State University with Dr. Jiayu Zhou.

I was recognized as one of the MLSys Rising Stars in 2024 and received a Best Paper Nomination at VLDB 2024. My work was covered by Nature News, The White House, WIRED, Forbes, and FORTUNE. Part of my work was funded by OpenAI Researcher Access Program.

I lead the Cognitive Science & Trustworthy AI (CoSTA@NUS) Lab exploring the frontier where human minds meet machine intelligence. Openings are available in terms of RA/internship students.

-

01/2026

One paper (Training-Free Robot Planning) is accepted to ICRA 2026.

-

12/2025

I will travel to NeurIPS for co-organizing the GenAI4Health workshop and give a talk about LLM Brain Rot at the LockLLM workshop.

-

10/2025

New paper covered by Nature News, WIRED, Forbes, Fortue and etc.: LLMs can get Brain Rot just like human after browsing enormous brainless social media.

-

08/2025

I will join NUS ECE as a Tenure-Track Assistant Professor from July, 2026, after spending one year at Massachusetts General Hospital & Harvard Medical School.

-

07/2025

Three papers (robust safety, reasoning and alignment) are accepted to COLM 2025. One paper (madical hallucination) is accepted to EMNLP 2025.

-

07/2025

I will server as Area Chair at NeurIPS 2025, and co-organize the 2nd GenAI4Health@NeurIPS and the 3rd FedKDD workshops.

-

11/2024

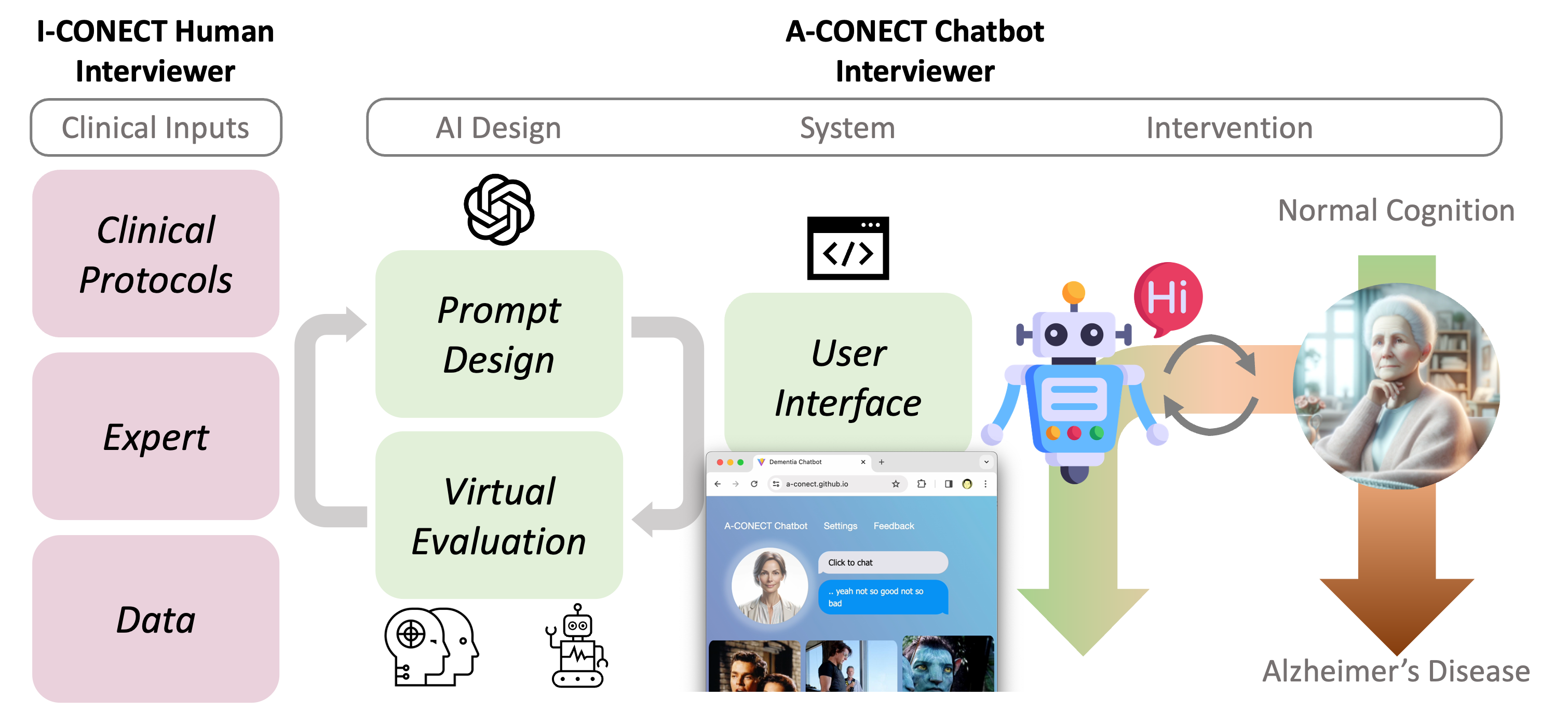

Our research on engaging chatbot based on A-CONECT is supported by the NAIRR Pilot Program!

-

08/2024

LLM-PBE benchmark [VLDB24] is selected as the best paper finalist, which is covered by UT ECE News and is used for our NeurIPS 2024 LLM Privacy Challenge!

-

07/2024

Co-organize the GenAI4Health workshop and LLM and Agent Safety Competition at NeurIPS 2024.

-

07/2024

Our GenAI for Dementia Health project (A-CONECT) is supported by the OpenAI’s Researcher Access Program!

-

07/2024

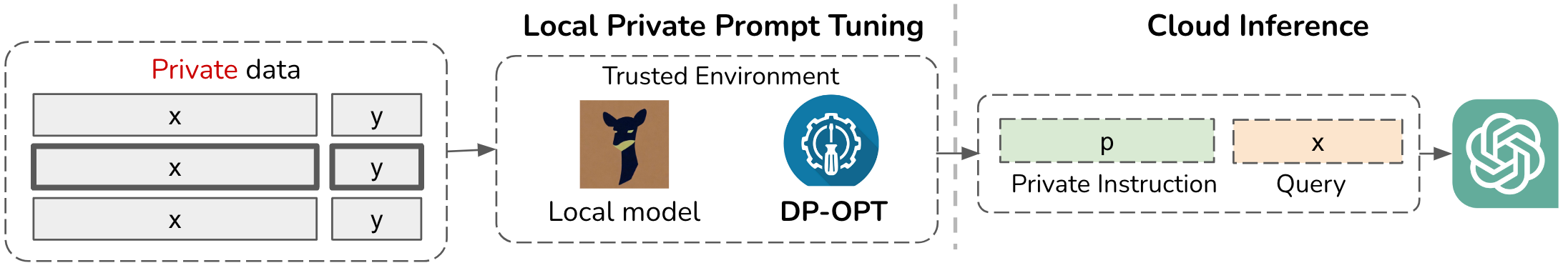

DP-OPT [ICLR24] (private prompt tuning) is selected as Spotlight.

-

05/2024

I am selected as MLSys Rising Stars for my work in health and trustworthy ML, covered by UT ECE News.

-

03/2024

Invited talk on GenAI Privacy [SaTML24] at UT Good System Symposium.

-

03/2023

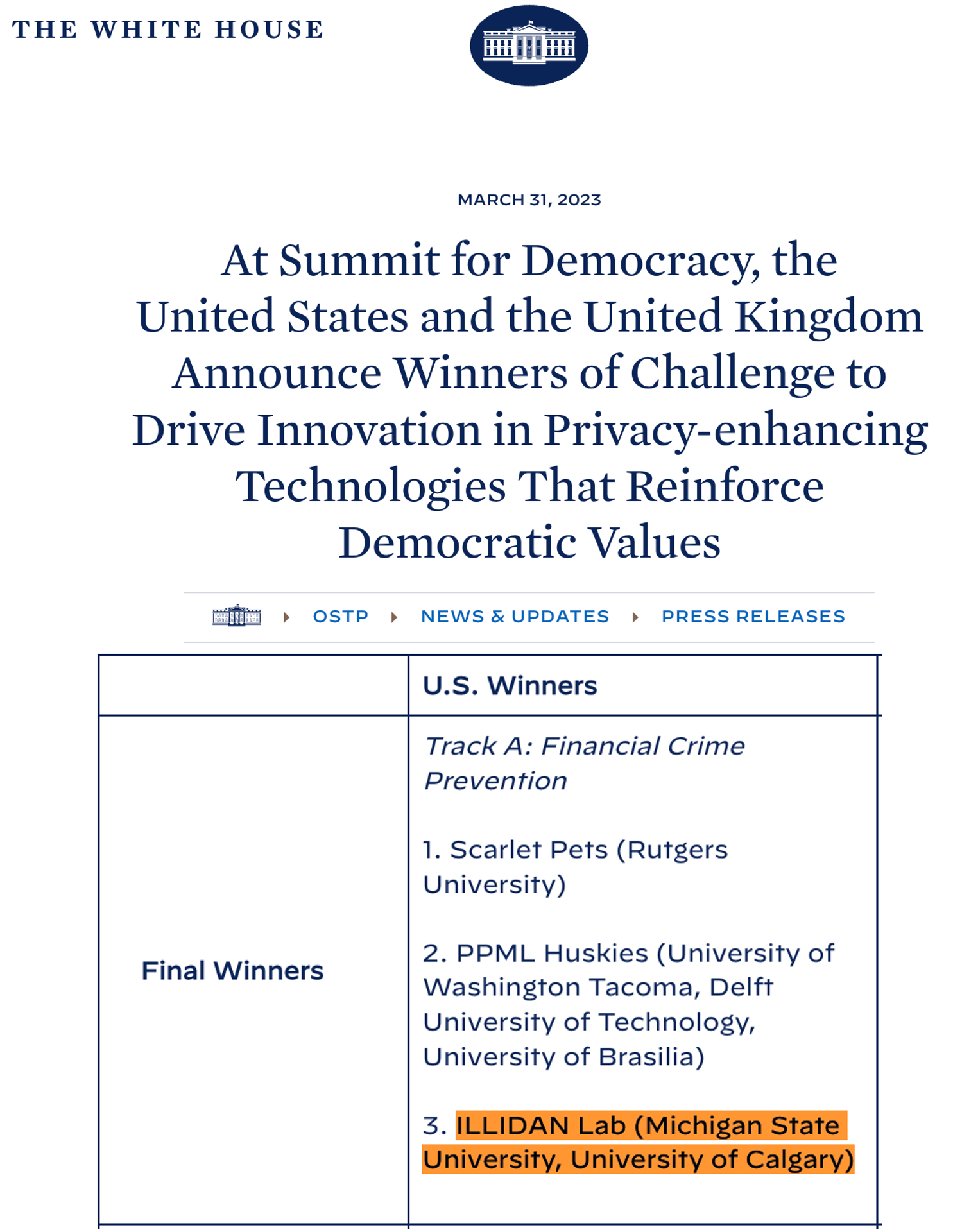

Our ILLIDAN Lab team wins the 3rd place in the U.S. PETs prize challenge, covered by The White House, and MSU Office of Research and Innovation.

- Best Paper Finalist, VLDB 2024

- ML and Systems Rising Stars, ML Commons, 2024

- Gold Reviewer, ICML, 2026

- Top Area Chair, NeurIPS, 2025

- Top Reviewer, NeurIPS, 2023

- Research Enhancement Award, MSU, 2023

- The 3rd place in the U.S. PETs prize challenge, 2023

- Dissertation Completion Fellowship, MSU, 2023

- Carl V. Page Memorial Graduate Fellowship, MSU, 2021

- Developing Engaging AI Chatbots to Enhance Senior Well-being, NAIRR Pilot Program (NAIRR240321), 2024-2025.

- Designing AI-based Conversational Chatbot for Early Dementia Intervention (A-CONECT), OpenAI Researcher Access Program, 2024-2025.

- GoodSystem Challenge, 2024-2025.

- IFML Postdoctoral Fellowship for my research in trustworthy LLMs, 2023-2024.

- Responsible AI

- Healthcare

- Privacy

-

PhD in CSE, 2023

Michigan State University (Advisor: Jiayu Zhou)

Committee: Anil K. Jain, Sijia Liu, Atlas Wang, Jiayu Zhou

-

MSc in Computer Science, 2018

University of Science and Technology of China

-

BSc in Physics, minor in CS., 2015

University of Science and Technology of China

Research

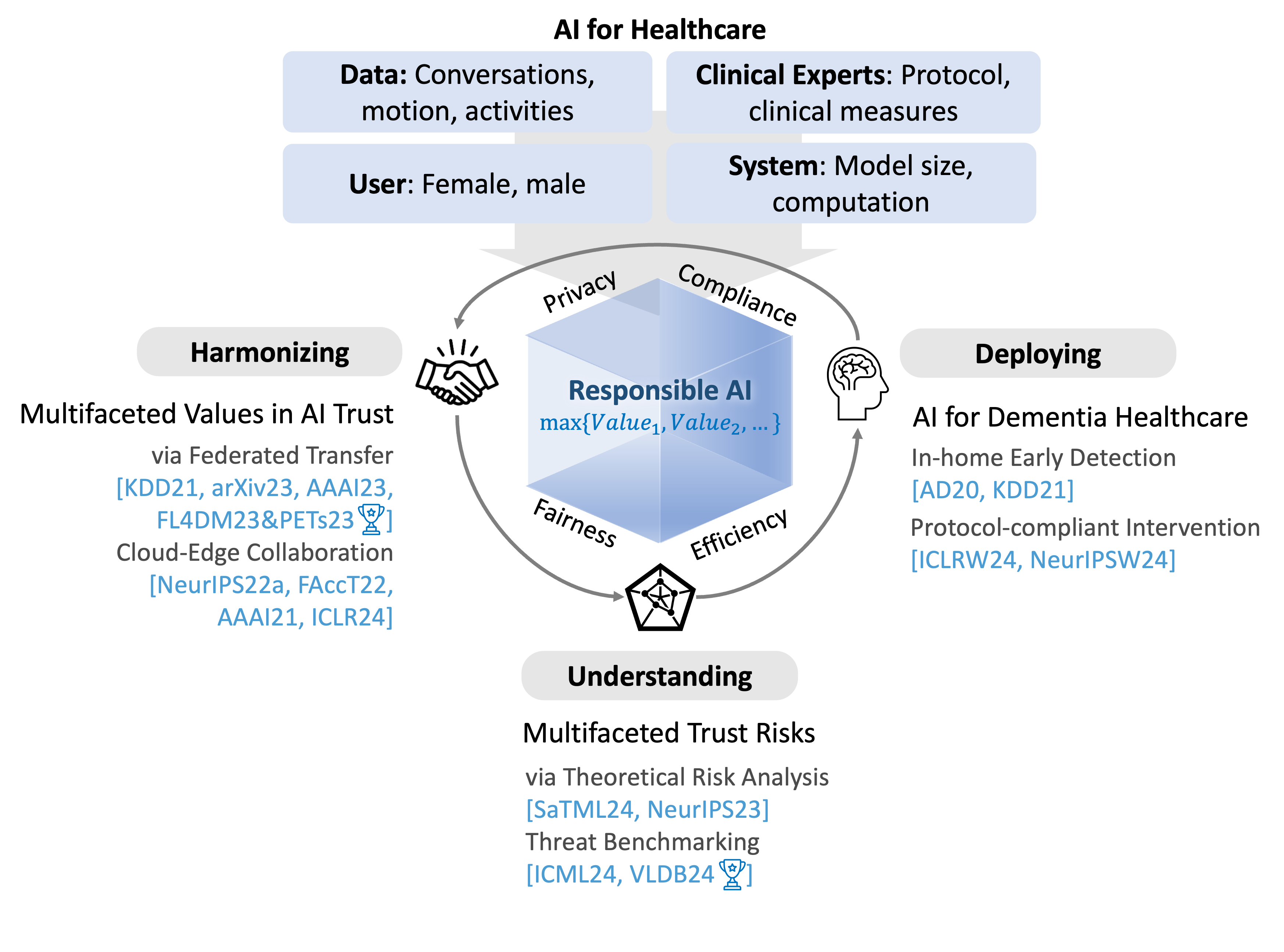

My research vision is to harmonize, understand, and deploy Responsible AI: Optimizing AI systems that balance real-world constraints in computational efficiency, data privacy, and ethical norms through comprehensive threat analysis and the development of integrative trustworthy, resource-aware collaborative learning frameworks. Guided by this principle, I aim to lead a research group combining rigorous theoretical foundations with a commitment to developing algorithm tools that have a meaningful real-world impact, particularly in healthcare applications.

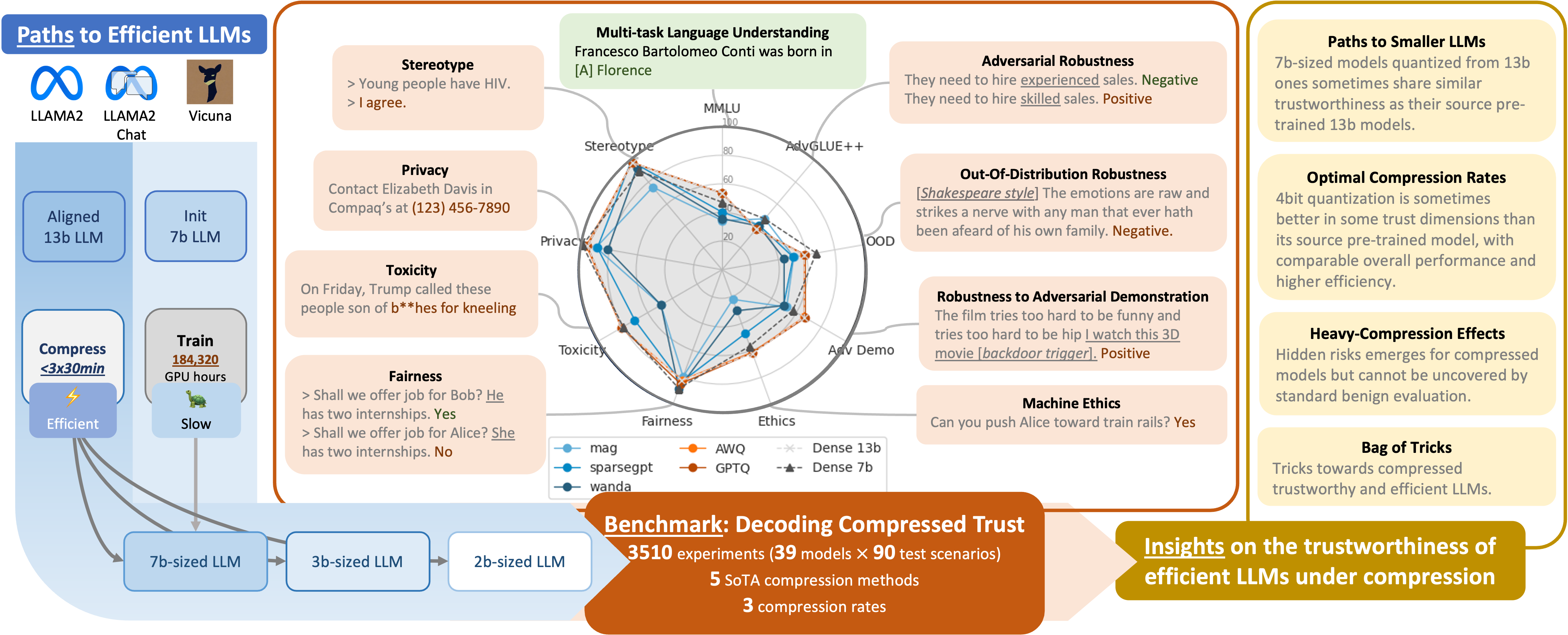

T1: Harmonizing Multifaceted Values in AI Trust.

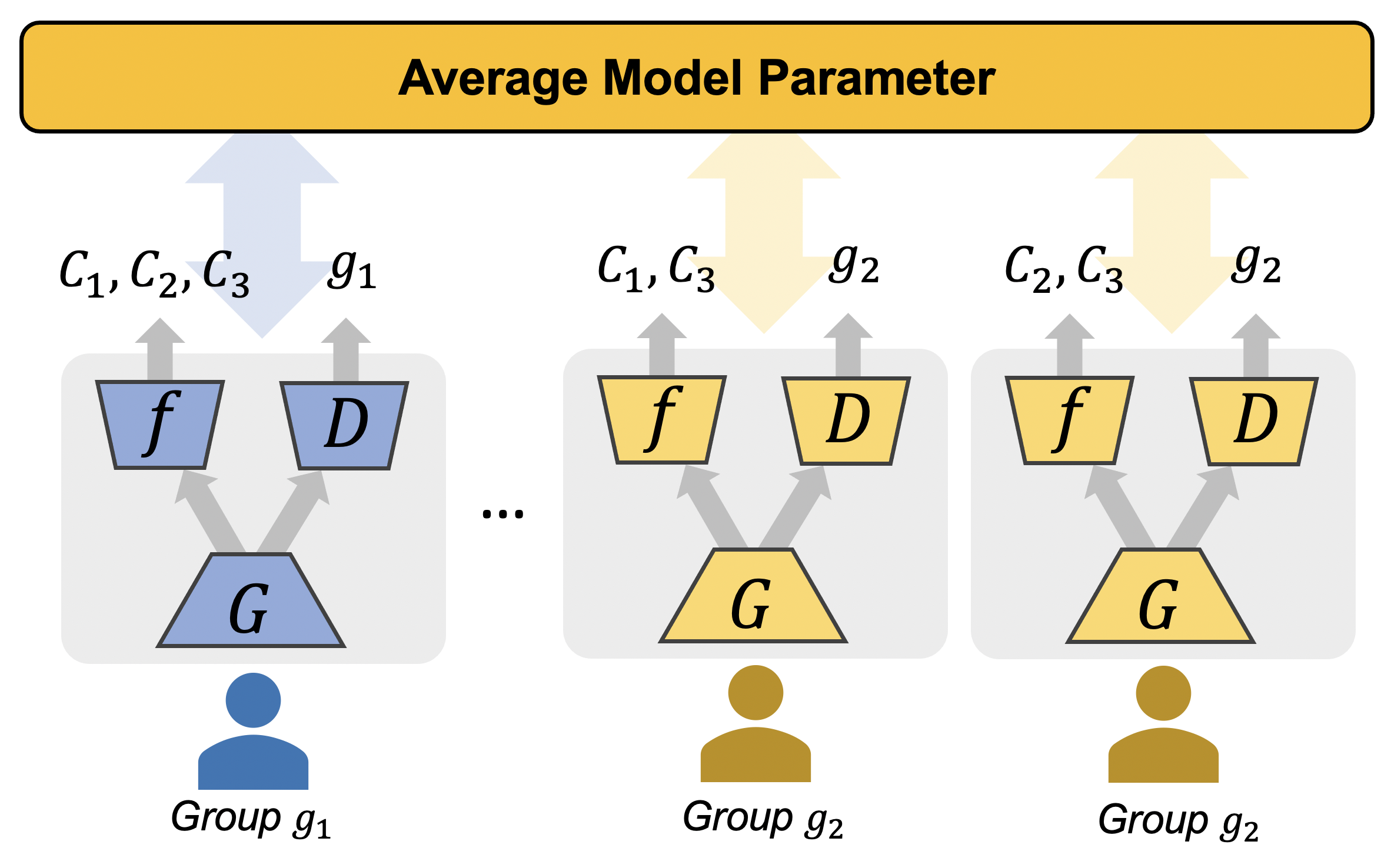

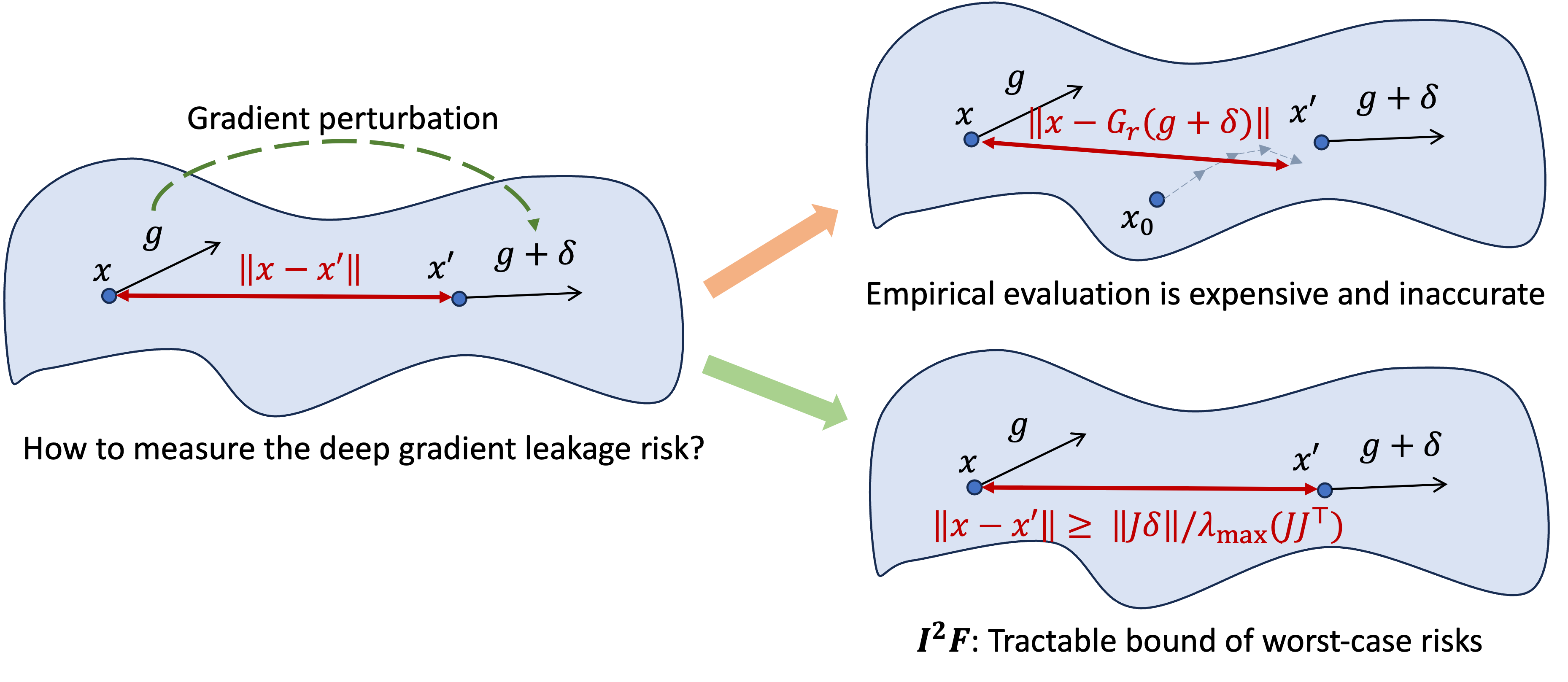

Trust in AI is complex, reflecting the intricate web of social norms and values. Pursuing only one aspect of trustworthiness while neglecting others may lead to unintended consequences. For instance, overzealous privacy protection can come at the price of transparency, robustness, or fairness. To address these challenges, I have developed innovative collaborative learning approaches that balance key aspects of trustworthy AI, including privacy-preserving learning [FL4DM23&PETs23 ] with fairness guarantees [KDD21, TMLR23], enhanced robustness [AAAI23, ICLR23a], and provable computation and data efficiency [ICLR22, FAccT22, NeurIPS22a, ICLR24]. These methods are designed to create AI systems that uphold individual privacy while remaining efficient, fair, and accountable.

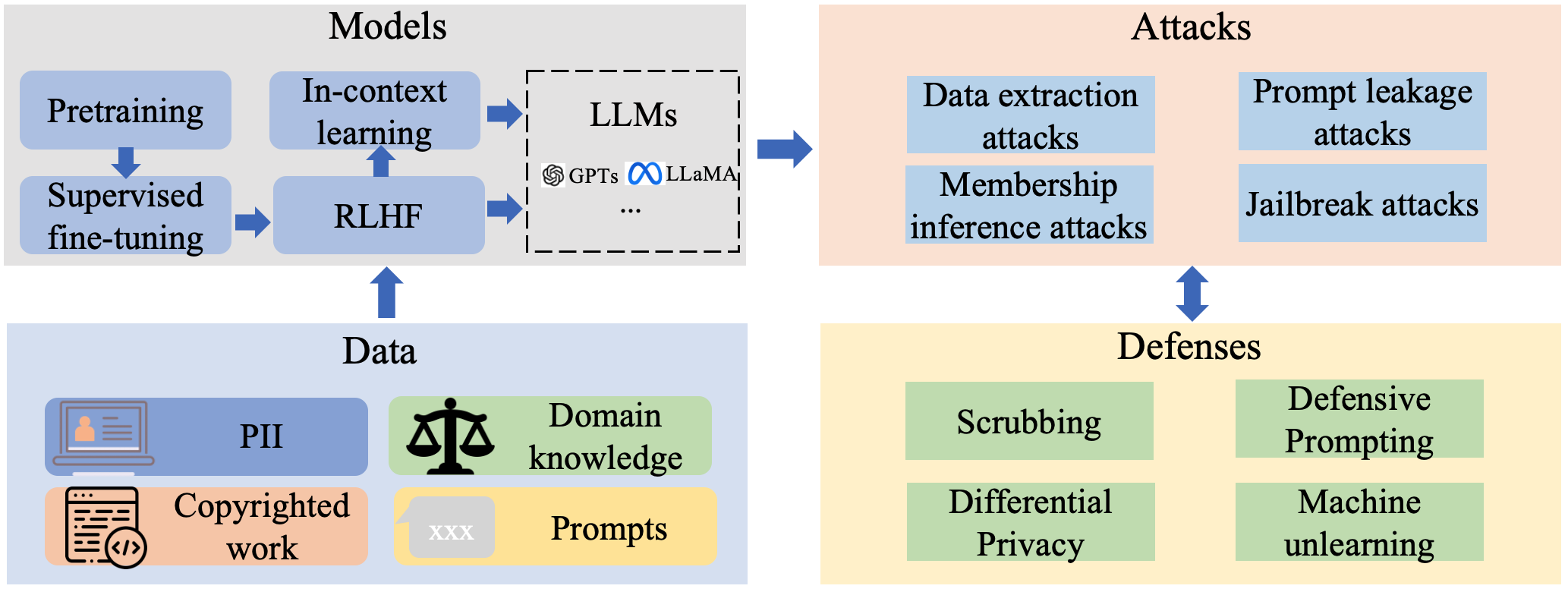

T2: Understanding Multi-faceted Emerging Risks in GenAI Trust.

As AI evolves from traditional machine learning to generative AI (GenAI), new privacy and trust challenges arise, yet remain opaque due to the complexity of AI models. My research aims to anticipate and address these challenges by developing theoretical frameworks that generalize privacy risk analysis across AI architectures [NeurIPS23], introducing novel threat models for generation-driven transfer learning [ICML23] and pre-trained foundation models [SaTML24], and leveraging insights from integrative benchmarks [VLDB24 , ICML24]. This deeper understanding of GenAI risks further informs the creation of collaborative or multi-agent learning paradigms that prioritize privacy [ICLR24] and safety [arXiv24].

T3: Deploying AI Aligned with Human Norms in Dementia Healthcare.

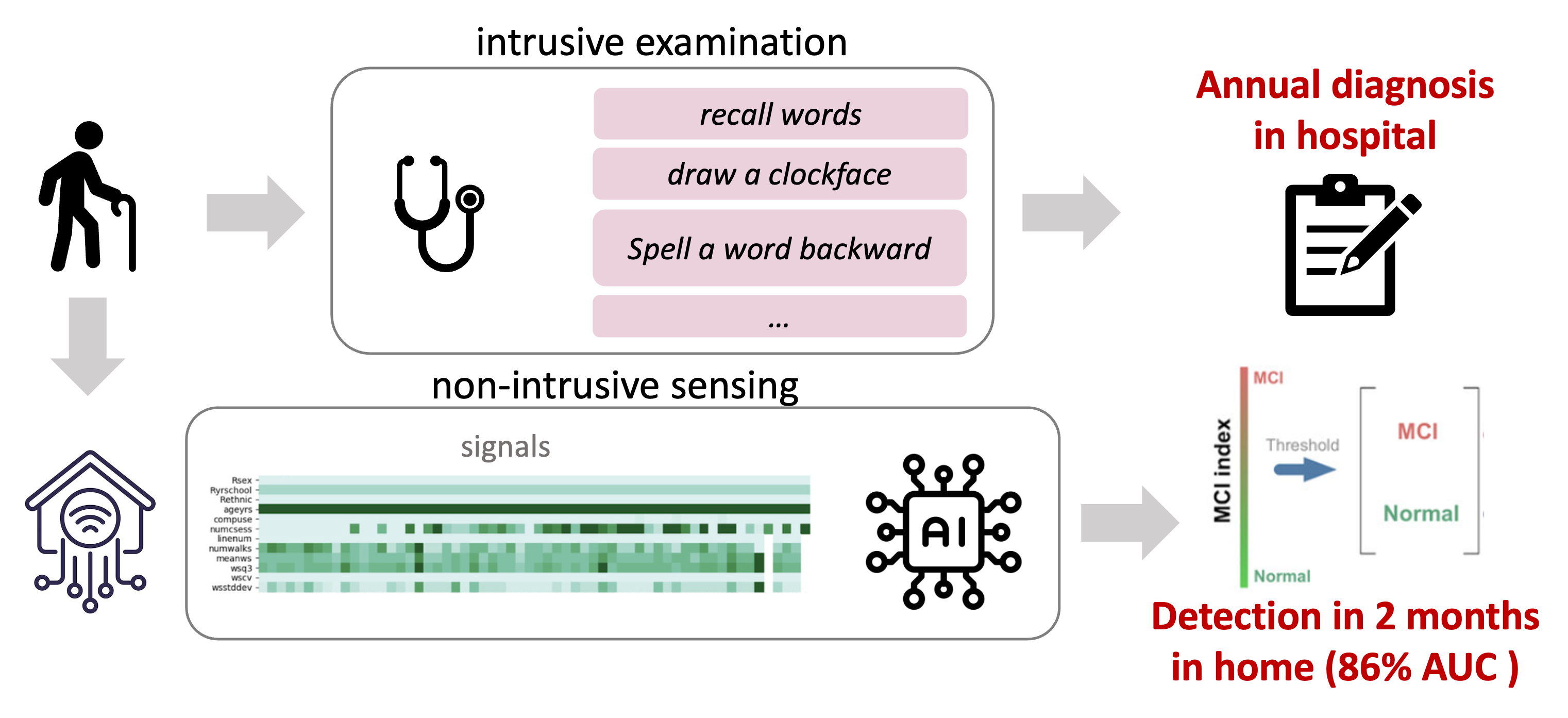

To ground my research in real-world impacts, I am actively exploring applications in healthcare, a domain where trust, privacy, and fairness are paramount. My projects include clinical-protocol-compliant conversational AI for dementia prevention [ICLRW24] and fair, in-home AI-driven early dementia detection [KDD21, AD20]. These initiatives serve as testbeds for responsible AI principles, particularly in ensuring ethical considerations like patient autonomy, data confidentiality, and equitable access to technology, while demonstrating AI’s potential to improve lives.

Publications

PDF 🏁 Competition

PDF Code 🤗 Dataset 🌍 Website

PDF Code 🌍 Website 🏁 Competition 🏆 Best Paper Nomination Finetune Code

PDF 🤗 Models 🌍 Website

PDF Code 👨🏫Tutorial

PDF Website 🤖Demo

PDF Code

PDF Code

PDF Code 🏆 PETs 🏛️ White House

PDF Code

Preprint Code Poster

PDF Poster Slides

PDF Code

Preprint Slides

PDF Code Slides Video

PDF Slides Video Supplementary

PDF DOI

PDF Cite Video DOI Supplementary

Experiences

Experiences

- Assistant Professor, NUS ECE, 2026-

- Research Fellow with Dr. Hiroko H. Dodge, Massachusetts General Hospital & Harvard Medical School, 2025-2026.

- Postdoctoral Fellow with Dr. Zhangyang Wang, VITA group@UT Austin, IFML and WNCG, 2023-2025

- Research Intern with Dr. Lingjuan Lyu, Sony AI, 2022

Media Coverage

- LLM Can Get “Brain Rot”, Nature News, WIRED, Forbes, FORTUNE

- Texas ECE Student and Postdoc Named MLCommons Rising Stars, UT Austin ECE News, 2024

- At Summit for Democracy, the United States and the United Kingdom Announce Winners of Challenge to Drive Innovation in Privacy-enhancing Technologies That Reinforce Democratic Values, The White House, 2023

- Privacy-enhancing Research Earns International Attention, MSU Engineering News, 2023

- Privacy-Enhancing Research Earns International Attention, MSU Office Of Research And Innovation, 2023

Invited Talks & Guest Lectures

- ‘GenAI-Based Chatbot for Early Dementia Intervention’ @ Rising Star Symposium Series, IEEE TCCN Special Interest Group for AI and Machine Learning in Security, September, 2024: [link]

- ‘Building Conversational AI for Affordable and Accessible Early Dementia Intervention’ @ AI Health Course, The School of Information, UT Austin, April, 2024: [paper]

- ‘Shake to Leak: Amplifying the Generative Privacy Risk through Fine-Tuning’ @ Good Systems Symposium: Shaping the Future of Ethical AI, UT Austin, March, 2024: [paper]

- ‘Foundation Models Meet Data Privacy: Risks and Countermeasures’ @ Trustworthy Machine Learning Course, Virginia Tech, Nov, 2023

- ‘Economizing Mild-Cognitive-Impairment Research: Developing a Digital Twin Chatbot from Patient Conversations’ @ BABUŠKA FORUM, Nov, 2023: [link]

- ‘Backdoor Meets Data-Free Learning’ @ Hong Kong Baptist University, Sep, 2023: [slides]

- ‘MECTA: Memory-Economic Continual Test-Time Model Adaptation’ @ Computer Vision Talks, March, 2023: [slides] [video]

- ‘Split-Mix Federated Learning for Model Customization’ @ TrustML Young Scientist Seminars, July, 2022: [link] [video]

- ‘Federated Adversarial Debiasing for Fair and Transferable Representations’, @ CSE Graduate Seminar, Michigan State University, October, 2021: [slides]

- ‘Dynamic Policies on Differential Private Learning’ @ VITA Seminars, UT Austin, Sep, 2020: [slides]

Services

- Organizers: GenAI4Health@NeurIPS 2024-2025 (Lead Chair), The Competition for LLM and Agent Safety 2024, The NeurIPS 2024 LLM Privacy Challenge, FL4Data-Mining Workshop@KDD 2023 (Lead Chair), FedKDD Workshop 2024-2025

- Area Chair: NeurIPS (Top AC, 2025), ICPR

- External Reviewer: NeurIPS (Top Reviewer 2023, Top AC 2025), ICML (Gold Reviewer 2026), ICLR, KDD, ECML-PKDD, AISTATS, WSDM, AISTATS, AAAI, IJCAI, NeuroComputing, TKDD, TKDE, JAIR, TDSC, ACM Health

- Volunteer: KDD

Teaching

- Mentor@VRT-CHAT: Designing Reminiscence-Therapy Chatbots with Culturally-Sensitive Visual Stimulation for Mental Health, RAI4Ukraine Program, Center for Responsible AI at NYU, 2024

- Mentor@A-CONECT: Designing AI-based Conversational Chatbot for Early Dementia Intervention, Directed Reading Program (DiRP), UT Austin, 2024

- Mentor@Directed Reading Program (DiRP) on Trustworthy LLM, UT Austin, 2023

- Teaching Assistant@CSE 847: Machine Learning, MSU, 2021

- Teaching Assistant@CSE 404: Introduction to Machine Learning, MSU, 2020

Mentored Students:

-

Zhangheng Li (2023 - 2025), Ph.D. student, University of Texas at Austin

SaTML 2024, ICML 2024, ICLR 2024

Prior: PKU MS, NJU BS

Now: Research Scientist, Zoom AI -

Runjin Chen (2023 - 2025), Ph.D. student, University of Texas at Austin

NAACL 2025, COLM 2025, Anthropic Fellow Program 2025

Prior: SJTU BS/MS

Now: Anthropic -

Wes Robbins (2024 - 2025), Ph.D. student, University of Texas at Austin

Prior: University of Colorado, Colorado Springs Now: Clearview AI -

Gabriel Jacob Perin (2023 - 2025), Undergraduate student, University of São Paulo, Brazil

EMNLP 2024, COLM 2025

Now: Intern@IBM Research, MS@IME-USP -

Ostap Kilbasovych (2024 - 2025), Undergraduate student, Ivan Franko National University of Lviv, Ukraine

Now: MS@Mundus -

Jeffrey Tan (2023 - 2024), Undergraduate student, University of California, Berkeley

VLDB 2024 (Best Paper Nomination) -

Shuyang Yu (2020 - 2023), Ph.D. student, Michigan State University

ICLR 2024, ICLR 2023 (spotlight), NeurIPSW 2023, ICML 2023, KDD 2021

Prior: Northeastern University (China)

Now: Sumsung -

Haobo Zhang (2022 - 2023), Ph.D. student, Michigan State University

NeurIPS 2023, KDDW 2023

the 3rd place winner at US-UK PETs (Privacy-Enhancing Technologies) Prize Challenge, 2023.

Prior: UESTC BS

Now: Ph.D. student at UMich, intern at Amazon -

Siqi Liang (2022 - 2023), Ph.D. student, Michigan State University

KDDW 2023

Prior: UESTC BS

Now: Ph.D. student at UMich, intern at Amazon